The Era When All Humans Do Is Say "LGTM"

My name is Tyler Oakes. I was a programmer at a startup.

One morning I booted my machine and saw a Slack message from the founder.

Founder: "That thing is blowing up. Can we ship one?"

That thing. Right. Slightly annoying, but my overnight batch was still running, so I had a window.

I sat down and did my morning ritual: voice warmups. Clear pronunciation improved model parsing, or at least I believed it did.

I Googled "that thing," fed the latest papers and launch threads into a frontier model, and got the summary: an AI productivity layer that supposedly makes other AIs 10x better, with arXiv backing and endless benchmark screenshots. I did not fully understand it. Nobody did. Everyone wanted it anyway.

"Build this." A URL appeared.

I went to the restroom, grabbed canned coffee from the corner store, played some instrumental track from Spotify that I had heard a hundred times but still couldn't name, then watched animal clips for fifteen minutes. One was a hippo colliding with a compact car on an Indian highway. Internet quality control remains undefeated.

Then: notification ping.

Task complete. Implementation summary follows.

The AI listed changes in a neutral tone, like reading weather. No pride, no drama, just diff, tests, and rollout notes. I approved.

After that, CI parallelized the checks, three separate frontier models reproduced the results, performance gates passed, E2E passed, and the branch auto-merged before I finished my coffee. I shared staging with the founder. He voiced strategic concerns for ten minutes, then ended with, "Let's release and see user reaction," which means, "Release."

Day two: staging effectively became production. Comms and outbound were automated. The system sent emails down a ranked list and posted machine-readable updates to companies whose intake was MCP-native. I did not read the replies.

A small marketing budget got approved. Ads went live. Smaller creators started posting clips. One popped off. Traffic jumped. Then alerts fired: crawler waves hit docs and endpoints all at once, a short burst that looked suspiciously like coordinated probing.

A few errors appeared. The AI self-healed the obvious ones. I trust automation for small fires, so I ignored noise and opened the dashboard. Red tiles. I clicked one, skimmed a dense report, and typed one line: "Optimize this."

Twenty minutes later, something had been optimized. Key metrics jumped by roughly seventy percent. Lots of green. A pull request showed +3,000 lines and -18,000 lines. Looked dramatic, therefore probably good. Then I added my second line: "Prevent recurrence."

A regression suite appeared. I did not inspect it. I approved it. Day six of development.

Founder: "Feels pretty good."

Me: "Yeah."

Our feature landed on the product page index. On day seven, traffic collapsed. A major tech company released the same thing at much higher quality, with deep integrations we couldn't match. The earlier "crawler burst" had likely been behavioral reconnaissance.

With our budget, there was no counterpunch. We withdrew.

Day seven ended with no hope, only vacuum. Still, I told myself it counted as progress: we built an idea worth copying. I took that as proof I had leveled up.

Then I updated my resume and started applying. My name is Tyler Oakes. I was a programmer with seven intense days of startup experience.

What every company was hiring for, meanwhile, was something called an "Orchestrator."

Orchestrator. Like a conductor. What are you conducting? An orchestra? I can't play an instrument.

But when I looked closer, I saw that the company — that company, the one that cloned our product in five days and shipped it on the sixth and killed us on the seventh — was hiring Orchestrators by the hundreds. I looked at the compensation and blinked. Then I looked again. Five times my last salary. I counted the digits. They were all there. My startup salary was just that low, maybe. Either way, I applied.

The interviews were strange.

First round. The person on the screen didn't ask me a single technical question. No LeetCode. No system design. No whiteboard. No "tell me about a time you resolved a merge conflict at scale." None of it. Instead:

"What's something you've been obsessed with recently? Outside of work."

I told them about a forty-five-minute video essay I'd watched about why the baggage carousel at SJC goes counterclockwise. I watched it twice.

The interviewer nodded thoughtfully. "So you're naturally curious."

Am I?

Second round. Different interviewer.

"Can you handle long stretches where nothing is happening?"

I told them about the time a batch job ran for forty-eight hours straight and I had to monitor it. The interviewer looked impressed.

"Patience. That's rare."

I was asleep for most of it.

Third round.

"Would you describe yourself as agreeable?"

Agreeable. I'm the guy who, when the founder said "ship it," said "yep" and shipped it. I am the human embodiment of agreement. I am the living manifestation of "sounds good."

"Yes."

Final round. A VP showed up.

"Do you trust your own taste?"

I froze. Taste. What is my taste? I'm the guy who listens to the same lo-fi playlist every day and doesn't know a single song title. My taste?

But then I thought about those seven days. I remembered clicking through the dashboard. Green items: skip. Red items: click. "Can you optimize this?" "Make sure this doesn't happen again." I did that, and things got better. For six of those seven days, things got better. If that's not taste, what is?

"Yes."

I got the job. Through four rounds of interviews, no one asked me to write a single line of code.

On my first day, they showed me the Factory.

Not a physical factory. It lived on screens. Specifically, it was a fully autonomous product development pipeline. Internally, everyone just called it "The Factory."

My onboarding buddy was three months in and used to be a graphic designer. Not even an engineer. She said:

"First rule: do nothing."

Do nothing. My specialty.

She pulled up the dashboard. Multiple product lines were flowing through it. Research. Design. Implementation. Testing. Deployment. Marketing. Analytics. All running automatically. There wasn't a single human avatar anywhere on the screen.

"Here's last night's activity log."

She scrolled. At 2 a.m., the Factory had scraped three papers from arXiv, generated twelve feature hypotheses, prototyped four of them, pushed two to staging, and shipped one to production. By 6 a.m. the results were in: that one was killed, and a feature from another pipeline, built the day before, was promoted instead. All of it happened while we were sleeping.

"Who did this?" I asked.

"Nobody. The Factory."

And right then, I understood something. That competitor release — the one that cloned our product on day six and destroyed us on day seven — wasn't made by humans. The Factory detected our launch on Product Hunt, autonomously decided we were a competitive threat, spun up a development pipeline, and shipped a better version before our Slack had finished celebrating.

Even the traffic spike that took down our servers on day five? Turns out that was the Factory's "competitive intelligence module" running some kind of automated load analysis. Technically legal. Barely.

I wasn't beaten by people. I was run over by a factory.

That stung a little. But honestly? It was also kind of a relief.

The more I learned about the Factory, the more my assumptions crumbled.

It wasn't just development. The Factory autonomously built differentiation features. It filed patents — on its own. It registered trademarks. It even wrote research papers and submitted them to conferences. … Patents? The thing I'm worst at. Apparently a parallel legal AI ran alongside the Factory, checking intellectual property law across every jurisdiction in real time. Last month alone, the Factory filed four hundred and seventy-eight patent applications. The human IP team was three people, and their entire job was to look at the AI's output and pick the ones that were actually worth something. Not unlike my job, when you think about it. Just say "LGTM" and move on.

And the competitive response. The Factory monitored the market continuously. When a competitor appeared, counter-features shipped within twenty-four hours. Simultaneously, it pushed a hundred and fifty product variations per day, kept the winners, killed the rest. Every day. Yesterday's product was already a different company from today's.

One morning, a legacy enterprise player tried to clone one of our features. The Factory detected the intent — not even the launch, the intent — and preemptively shipped three differentiators, filed three patents, and registered two trademarks to block them. By the time I woke up, there was a single notification in my feed: "Competitive response complete. Details →"

I didn't click the link. The indicator was green.

So what does an Orchestrator actually do?

Answer: mostly, watch.

My typical day goes like this. Wake up — I'm remote, everyone's remote. Open the dashboard. If it's mostly green, I drink my coffee. If there's red, I drink my coffee while looking at it.

Sometimes the Factory gets too aggressive. It packs too many features into a release trying to bury a competitor, and the UX falls apart. Churn ticks up. Numbers start going yellow. I type:

"Dial it back."

The Factory pulls down the aggression parameter and switches to a UX-first mode.

Other times it gets too conservative. Clings to the existing user base, optimizes for retention, stops exploring new markets. Everything's green, but nothing's moving. That's when I say:

"You can push a little harder."

That's the Orchestrator's job. Human taste, calibrating the AI's balance between offense and defense. Now I understand why the interviews were all about curiosity, patience, taste, and agreeableness.

Oh, and: "That seems like it could be automated, so automate it." And usually, it gets automated.

There's one more part of the job. Responsibility. A couple of years ago, Congress passed a law that said a human has to be the final accountable party for any autonomous system's decisions. It's like LASIK. These days the machine does the whole procedure — a multimillion-dollar laser guided by software — and the doctor just presses a button. Recently they even shifted post-op liability from the doctor to the device manufacturer. That doctor does nothing but press a button, and they make four hundred grand a year.

But think about it. That button press is where the responsibility lives. Hundreds of millions of lines of code and billions of parameters, and at the end of the chain, one human being says "LGTM." That's worth a salary.

I used to say "LGTM" at my startup too. Back then it was free. Now the same four letters are worth five times my old pay. The world is broken. But the chair is comfortable.

On a morning in my second month, an internal news alert popped up.

M&A completed.

I looked at the acquisition target and thought I was hallucinating.

It was my old startup.

Why? They had already killed it. The product was dead. Users were gone. The servers were probably off. What value was left?

I messaged my former CEO. He was supposed to be in bankruptcy proceedings, but he sounded euphoric.

"Tyler, listen. Five billion yen. Five. Billion."

Five billion.

I went silent.

Then I heard the reason and went even quieter.

Our old company still held two patents obtained in the pre-AI era by a veteran engineer who had already retired. Human-brain inventions, human-hand implementation, human explanation at the patent office. Plus one more asset: the copyright for a goofy mascot our CEO drew as a hobby and used as an internal character.

That was it.

The Factory can build anything: code, features, papers, trademarks, even new algorithms. But it can't duplicate existing patents and copyrights without legal risk. If they copied them, lawsuits would hit, and the stock would move. For a company that size, five billion yen is a rounding error; litigation risk is not.

So their default M&A strategy is simple: buy everything. Build what can be built. Buy what cannot be replicated — the residue of human creation left behind as IP. Five billion was just "materials cost" to the Factory.

My old CEO laughed and cried at the same time. "That doodle is worth five billion, Tyler. That doodle."

He started scouting tropical islands.

I stared out the window. Gray sky. Same company, different outcomes: he got billions, I got salary. The world is unfair. Still, I wasn't bitter. He wasn't saved by charisma, and I wasn't defeated by skill. The winners were a retired engineer's decade-old patents and a late-night iPad doodle.

Things humans make with their own hands are the only things that remain.

Then one day, the world shook.

February 2026. News spread that an Austrian developer had joined OpenAI.

Peter Steinberger.

Everyone in software knew the name. He started PSPDFKit alone, spent more than a decade building it, then exited for one hundred million euros. A one-person start that reached a billion users.

After the exit, though, he broke. He had poured 200% of himself into the company for thirteen years. It was his identity. Once it was gone, he said, nothing was left. Money was there. Time was there. Meaning wasn't.

That feeling sounded familiar. Day seven. My own void.

Then he found a spark again through AI. At first, it was just a weekend side project: a personal assistant for himself. It sorted email, managed his calendar, checked him in for flights, booked restaurants. He built it because he wanted it. He called it Clawdbot.

Anthropic complained that the name sounded too close to Claude, so he renamed it Moltbot. In the five-second gap during the rename, crypto scammers hijacked his accounts. "It's over," he said. He almost killed the project. He didn't. He renamed it again: OpenClaw.

After that he went all in. All-nighters, constantly. Screenshots said six thousand six hundred commits in a single January. Running four to ten agents in parallel, barely writing code by hand anymore, mostly watching code stream by. His GitHub followers passed thirty thousand. His stars approached two hundred thousand. In roughly two months.

He got offers from everywhere. He chose OpenAI.

The compensation number was never confirmed. But in a year where OpenAI could buy companies for billions of dollars, even a rumored ten-figure package no longer sounded impossible. That's the scary part.

Sam Altman once predicted AI would let one person build a billion-dollar company. The prediction came true, and then got hired.

But what hit me hardest was this: the first billion-dollar opportunity came to someone who was already rich.

Unfair? Maybe. But it wasn't magic. It was thirteen years of building, then collapse, then void, then climbing out, then staying up at 3 a.m. watching code flow, getting hijacked, almost quitting, and still not quitting.

Not just talent. Not just taste. Energy, stamina, vision, and the will to climb out of emptiness.

I read all this while drinking coffee in front of my dashboard. I know that emptiness. The morning of day seven, staring at zero traffic. The difference between Peter and me wasn't talent. It was what happened after the void.

He turned a weekend project into a global shift.

I changed jobs.

Then the Japanese government moved.

Universal basic income. 300,000 yen per month. For everyone.

It sounded utopian, but the funding model was strangely practical.

The government introduced what people called a "human dignity tax." The official name was something like "AI Utilization Social Stabilization Contribution," but no one used that. News called it the dignity tax. Bars called it the robot tax. Social media called it the Yoshi tax.

In plain terms: companies pay based on AI usage with a correction factor. The more a company earns from AI while employing fewer humans, the more it contributes. Companies like mine.

The key design choice was this: none of that money goes into general government spending. One hundred percent goes into a dedicated UBI pool. Politicians can't touch it. The monthly amount is automatically adjusted each year by population and pool size. If population drops, per-person distribution rises. As AI spreads, the pool grows. It's basically a Factory-style feedback loop.

Companies protested at first. But even after paying the tax, AI-driven savings were still much larger. In practice, "AI is cheaper than humans even after tax" accelerated automation further. Ironic, but numbers don't care.

Society split in two.

People living on 300,000 yen looked calm at first. Tight in major cities, comfortable in rural areas. Late mornings, walks, library afternoons, cheap drinks with friends. No production. Minimal consumption. Peaceful.

But only at first.

The first six months felt like freedom. Every day was Sunday. Around one year in, the mood shifted. The void arrived.

Same pattern as Peter: money and time don't automatically produce meaning.

Then, little by little, people started making things. Painting, writing fiction, studying philosophy, learning instruments, growing food. Not for money. Just to create. Humans are not built to endure pure idleness.

The UBI pool stabilized over time. Population declined, AI use increased, payouts inched upward, and people who couldn't tolerate emptiness began countless small activities at the edges of the economy. Maybe UBI wasn't about GDP at all. Maybe it was about saving humans from the void.

The world of people who kept working went feral.

Average orchestrator income reached around forty million yen per year. Top-tier earners made hundreds of billions. The gap from the UBI layer was the largest in history.

Yet society stayed relatively stable because the floor was guaranteed. No hunger. Without hunger, people tolerate inequality more than you'd think. Jealousy stayed. Riots didn't.

The composition of workers changed, too. In the old era, many worked because they had to. Now everyone still working had chosen to. They could have lived on UBI and didn't.

People with curiosity, ambition, restlessness — people who couldn't stay still.

Peter was exactly that. He had money and time already, and still watched code flow at 3 a.m. A "one guy messing with bots" phase became a world-shifting product.

A world powered by desire and dreams. People who don't want to work don't have to. That's fine. But if your internal fire catches, you might end up buying an island.

My name is Tyler Oakes. I'm an orchestrator.

Every morning I open the dashboard. If it's green, coffee. If it's red, coffee plus judgment. Sometimes "push harder." Sometimes "ease up." Sometimes "automate that." Then I take responsibility.

My pay is five times my old salary and a tiny fraction of my old CEO's exit.

But lately, there is one thing I do by my own will.

Every night, I open a notebook and write ideas: "Wouldn't this be interesting?" No one sees it. I don't feed it to the Factory.

Why?

Because unborn ideas are still uncopyable. Patents, copyrights, brands — in the end, lasting value starts in a human head. My old startup got rescued by decade-old human patents and a doodle drawn at midnight. Peter became a giant because he built what he personally wanted on a weekend.

My notebook has things no one has seen. At least I think it does. Delusion? Maybe. Or the seed of five billion yen. Or one hundred fifty billion. I don't know.

Peter didn't know either. At 3 a.m., building a personal assistant, he probably wasn't thinking "this will reshape the world."

If there's a difference, it's this: he shipped his. Mine is still trapped in paper.

At least, until today.

One night, the same instrumental track came on again. I've heard it a hundred times. I still don't know the title.

I opened a fresh page and wrote, for the first time, a product name.

Product: ██████

I pushed it to GitHub. Stars: zero.

Peter's repo also started at zero.

My name is Tyler Oakes.

I used to be a programmer. Now I'm an orchestrator.

But tomorrow, I might be something else.

This time, I won't let it end in seven days.

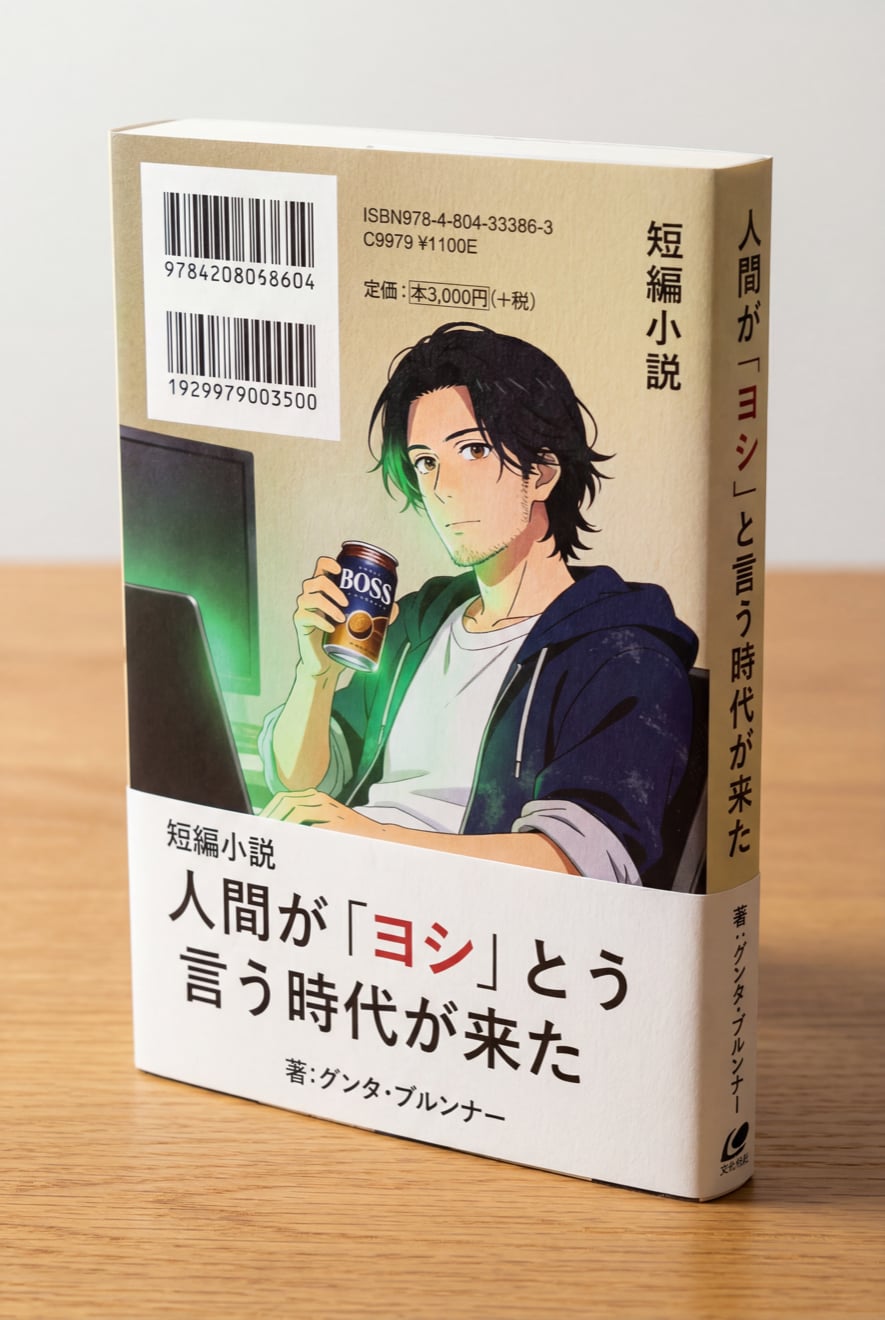

You just read the full English web edition.

The original Japanese edition is available on Amazon Japan.

Quoting, sharing, and discussing this story is encouraged.